I recently saw a paper by Travis LaCroix, Fintan Mallory and Sasha Luccioni entitled ‘Strategic polysemy in AI discourse: A philosophical analysis of language, hype, and power’ which immediately attracted my attention as I had worked on ambiguity and polysemy in the distant past. I am now working on metaphors used in and for AI but I hadn’t made the link with ambiguity and polysemy. This paper did! Fantastic. What really pleased me was that it cites a 2001 paper entitled ‘Ambiguities we live by: Towards a pragmatics of polysemy’ that I had written for fun with David D. Clarke. I never expected that paper, written a quarter of a century ago, to be picked up again in the context of generative AI.

In this post I’ll dig a bit into the similarities and differences between the 2026 paper, written in the era of generative AI, and our 2001 paper, written two decades before the advent of generative AI.

Polysemy in the wild and in AI discourse

A polysemous word like ‘cold’ can have multiple meanings, which can lead to ambiguity in language use, for example when somebody says ‘I have cold feet’, meaning they are afraid, and is (jokingly) offered a pair of socks.

Based on a collection of this and other examples, we argued in 2001 that ambiguity and polysemy can be actively created and exploited in everyday discourse for communicative, social, and rhetorical purposes, thereby reinforcing the semantic links between the nodes in a network of senses, strengthening social bonds between those who exploit polysemy in conversation, and helping to negotiate junctures between conversational turns. We wanted to make the point that ambiguity and polysemy are more than mere dictionary phenomena or psycholinguistic puzzles that need to be disambiguated, indeed that the standard assumption of ‘disambiguate as fast as possible, aim for one meaning’ is wrong.

LaCroix et al.’s 2026 paper has taken some of our insights into a new domain, namely contemporary AI research and industry, and has given them a darker inflection. They argue that in AI discourse metaphorical or colloquial terms like ‘hallucination’, ‘chain-of-thought’, ‘introspection’, ‘alignment’, and ‘agent’ can be used strategically for deception, hype and the mobilisation of investment and institutional support rather than benign social bonding. They demonstrate that such terms exhibit strategic polysemy, which means they sustain multiple interpretations simultaneously, combining narrow technical definitions with broader anthropomorphic or common-sense associations. This ambiguity or polysemy can be exploited in various ways, for example to deflect epistemic and ethical scrutiny.

Where we celebrated polysemy’s role in keeping language alive and cementing social bonds, LaCroix et al. are concerned with what happens when the social bonds being cemented are between corporations, funders, and regulators and involve asymmetric power relations. As LaCroix points out in a thread on Bluesky: “This ambiguity matters because language shapes public understanding, policy decisions, investment flows, trust in systems, etc.”

Let’s now look at various aspects of our paper in comparison with the LaCroix et al. paper.

Purposive ambiguity and ‘glosslighting’

In our paper we drew on Kittay’s notion of ‘purposive ambiguity’ — the deliberate exploitation of multiple meanings for rhetorical effect, whether in advertising, jokes, puns, humour or irony in ordinary conversation. We noted that this requires skill, and that the rewards can be social, monetary, and aesthetic. As when my husband said, upon seeing a sign at the university for an ‘Origami Society’ meeting: “I thought they had folded years ago!?”… amusement and laughter all round.

LaCroix et al. extend this in a different direction with their central coinage: ‘glosslighting’. This term is a blend or portmanteau word composed of ‘gloss’ referring to language and ‘gaslighting’ referring to manipulating someone into questioning their perception of reality. In the AI context ‘glosslighting’ means using “familiar words in a technical sense in a way that still evokes their everyday meaning, while keeping the option to fall back on the narrower definition when challenged”. Even basic words like ‘think’, ‘learn’ or ‘infer’ oscillate between literal and metaphorical uses in AI discourse and this linguistic ambiguity can be exploited for manipulation, hype and persuasion.

LaCroix et al. identify anthropomorphism as a particular engine driving AI polysemy. Terms like ‘hallucination’, ‘reasoning’, ‘introspection’, and ‘agent’ are not random metaphors; they borrow from the vocabulary of human mental life, giving AI systems the feel of cognition, indeed ‘intelligence’, even when the technical reality is statistical pattern-matching.

This is Kittay’s ‘purposive ambiguity’ operationalised at an institutional scale, with an asymmetric twist: the speaker knows both meanings and exploits the gap, while audiences (policymakers, journalists, the public) are largely unaware of the narrower technical reality hiding behind the evocative surface term.

Polysemy and ‘semantic traps’

In our paper we distinguished between intentional ambiguity (wit, irony, advertising) and unintentional ambiguity. One aspect of unintentional ambiguity is what we called ‘falling into semantic traps.’ The whole idea for the 2001 paper was in fact born from Brigitte falling into a semantic trap.

At the time, Brigitte had a small office at the end of a university corridor. Maintenance workers were laying new carpets throughout, including between the corridor and her office. To do that they had to take the door to Brigitte’s office off its hinges and Brigitte said: “Wait, let me first take my clothes off” (referring to her coat hanging on a hook on the door). Great hilarity ensued and the whole thing became a corridor anecdote for some time.

Interestingly, in contrast to purposive ambiguity, the humour of such semantic trap anecdotes often stems from the speaker being caught out, with the hearer exploiting the unintended double meaning.

LaCroix et al. describe a kind of reversed semantic trap where the audience (policymakers, investors, the public) fall into the trap, while the AI researchers and corporations deploying these terms are in the position of the knowing exploiter.

The term ‘alignment’, for example, evokes moral harmony between AI systems and human values in popular and policy discourse, while technically it is operationalised through reward modelling, preference learning, or constraint satisfaction, all rather technical issues that fall short of capturing the normative richness implied by the term in colloquial use. This gap allows developers to gesture towards ethical assurances while limiting their commitments to specific technical innovations.

Polysemy and social bonding

Our 2001 paper emphasised that ambiguity may help strengthen social bonds between those who share and exploit multiple meanings together. Falling into semantic traps, even when embarrassing, typically leads to joint laughter and connection.

LaCroix et al. point to a more troubling version of this: ‘glosslighting’ fuels hype cycles, garners funding, and shapes public and policy perceptions of AI, while deflecting epistemic and ethical scrutiny. The ‘social bond’ being forged is between AI companies and their investors. This bond is cemented by shared excitement about terms like ‘intelligence’ and ‘alignment’, while those outside the in-group (the public, regulators) are excluded from the knowing wink that these terms carry narrower technical meanings. The benefits of ambiguity accrue almost entirely to the more powerful party.

Conclusion

When social relations between people engaged in a conversation are at least vaguely symmetrical, words with multiple meanings can be used to purposively engage in banter, punning and jokes that lead to joint laughter and social bonding. This is an aspect of linguistic and conversational ambiguity and polysemy that we explored in our 2001 paper. When social relations between people engaged in a conversation are asymmetrical, especially when there is a difference in power and status, words with multiple meanings can be used strategically to manipulate and deceive. This is an ethical and epistemic aspect of linguistic ambiguity and polysemy that LaCroix et al. explore in the context of generative AI discourse.

In our 2001 paper we highlighted that polysemy is pragmatically alive, socially functional, and rhetorically powerful. In their 2026 paper LaCroix et al. applied some of our insights to a domain where those properties carry substantial ethical and political weight. They show that what we described as a feature of everyday human linguistic creativity can become, at institutional scale and under conditions of power asymmetry, a mechanism of manipulation and epistemic harm. The benign function of ambiguity is weaponised and the benefits of ambiguity use accrue only to people in power rather than leading to shared social bonding.

This is an entirely new aspect of the pragmatic use of ambiguity and polysemy we had not anticipated in our 2001 paper. We wrote our paper initially as a bit of fun, born from a hilarious encounter between Brigitte and maintenance workers in a university corridor. Transported into the context of AI discourse, our insights have taken on a new and darker meaning.

When ambiguity and polysemy are used for manipulation rather than merriment, it becomes important to think about how this use impacts AI safety and AI ethics. Interestingly, these topics have been explored in a couple of other papers using insights from our paper. I hope to explore this briefly in my next post.

Acknowledgement: Some parts of this post were inspired by my conversation about the two papers with Claude.

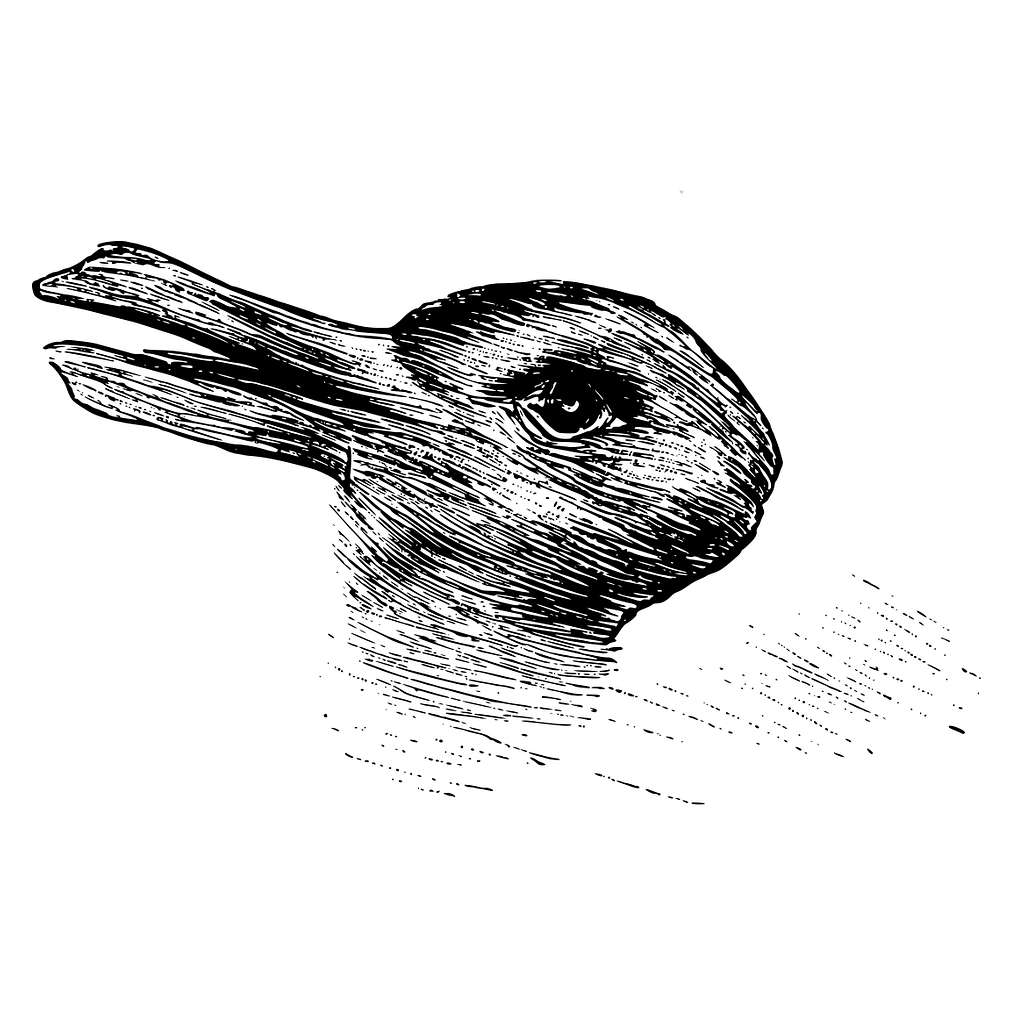

Image: Duck-rabbit illusion: “The rabbit–duck illusion is an ambiguous image in which a rabbit or a duck can be seen. The earliest known version is an unattributed drawing from the 23 October 1892 issue of Fliegende Blätter, a German humor magazine. It was captioned ‘Welche Thiere gleichen einander am meisten?’ (‘Which animals are most like each other?’), with ‘Kaninchen und Ente’ (‘Rabbit and Duck’) written underneath. The image was made famous by Ludwig Wittgenstein, who included it in his Philosophical Investigations as a means of describing two different ways of seeing: seeing that/seeing as.”
